Fires produce electromagnetic effects that can be detected with remote sensing techniques. Remote sensing as applied to fire prevention and management involves three sets of variables:

- the phases of a fire (pre-fire conditions, active fire, post-fire burn area);

- the sensors (optical, thermal infrared, lidar, radar, and microwave), which can be satellite-based, airborne, or ground-based;

- and the key variables to be estimated and mapped (vegetation type, topography, ground fuel, and weather, especially wind speed and direction).

Before a fire, remote sensing helps with risk analysis, mitigation, and prevention planning; once a fire starts, it helps to detect, respond to, and manage it; after a fire, it helps to map burnt areas. Each sensor has its strengths and weaknesses. The best solution is often a combination of two or more sensors, and of space-based or airborne remote sensing with ground-based surveys.

KEY VARIABLES

“Fuel, topography, and weather are the three critical components to understanding how fires behave,” says David Buckley, Vice President for GIS Solutions at DTSGIS, which develops software solutions to support fire risk analysis and fire fighting. “There are detailed data for each of those and they are all combined to generate an output that will identify different conditions of threat, or probability of a fire occurring, and risk, which is the possibility of loss or harm.” See examples in Figures 1-2.

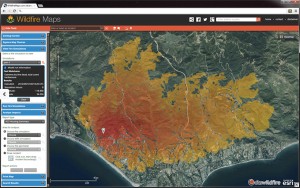

FIGURE 1.

DTSGIS provides a suite of tools shown on this Wildfire map that includes incident management, wildfire analysis, and public-facing tools to explore incidents.

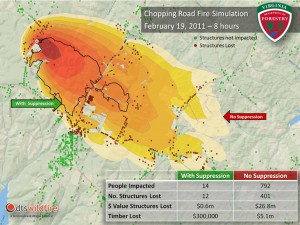

FIGURE 2.

Simulation of the Chopping Road fire, in Virginia, on February 19, 2011, courtesy of DTSGIS.

PREVENTING FIRES

“Remote sensing instruments can provide information on drought conditions, water content, fuel properties, and the state of the vegetation,” explains Tom Kampe, NEON’s Assistant Director for Remote Sensing. “Many of these systems also provide information on local weather.” Fuel type can be mapped, like classical vegetation, from optical or radar images. Whether wildfires will ignite, and how they will spread, depends also on fuel moisture. NEON is the National Science Foundation-sponsored National Ecological Observatory Network that incorporates both ground-based and aerial observation, with a view toward a 30-year consistent record to understand global change.

Different types of sensors can be used to estimate fuel moisture, says Brigitte Leblon, professor of remote sensing at the University of New Brunswick, Canada. Optical sensors deployed by NOAA (National Oceanic and Atmospheric Administration), such as the AVHRR (Advanced Very High Resolution Radiometer), or by the LANDSAT, MODIS (Moderate Resolution Imaging Spectroradiometer), or SPOT missions, she explains, enable the production of NDVI (Normalized Difference Vegetation Index) images that show how green the vegetation is; the less green it is, the more it is likely to burn.

“However, greenness changes could also be due to insect infestation, fungi, or something else, so, it is not a very good indicator of fuel moisture.”
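The greenness index mentioned above has a simple standard definition: NDVI = (NIR - Red) / (NIR + Red), where NIR and Red are reflectances in the near-infrared and red bands. The following is a minimal sketch of that calculation for single pixels. The function name and the reflectance values are hypothetical and chosen only for illustration; they are not taken from AVHRR, Landsat, MODIS, or SPOT data.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel.

    nir and red are reflectances (0 to 1) in the near-infrared and
    red bands. The result lies between -1 and 1; higher means greener.
    """
    total = nir + red
    if total == 0:
        return 0.0  # no signal in either band; treated as neutral here
    return (nir - red) / total


# Hypothetical (nir, red) reflectance pairs, for illustration only.
pixels = {
    "green canopy": (0.50, 0.05),
    "dry grass": (0.30, 0.20),
    "bare soil": (0.25, 0.20),
}

for label, (nir, red) in pixels.items():
    print(f"{label}: NDVI = {ndvi(nir, red):.2f}")
```

As Leblon cautions, a low value only says that the vegetation is less green; it does not say why.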

“NOAA’s AVHRR and LANDSAT also have thermal infrared bands that allow measuring temperature increases that are produced when the surfaces are getting dryer. However, the image acquisition is limited to clear sky conditions. Radar sensors can be used to estimate fuel moisture because radar responses relate directly to the dielectric properties of the area and thus to its moisture.”

The U.S. federal government’s multi-agency LANDFIRE project (www.landfire.gov) provides data on pre-fire conditions, typically at the 30-meter level. All of these variables are used as inputs into fire danger prediction systems, such as the U.S. National Fire Danger Rating System (NFDRS), which also take into account other pre-fire conditions, such as proximity to roads and populated areas. Airborne lidar can provide additional data on vegetation types around specific assets or structures, such as homes or electric utility infrastructure.

FIGHTING FIRES

For fire fighting, early detection is essential. It currently relies on human observation, fixed optical cameras, and aerial surveys, but not on satellite sensors because of their long revisit time. For detection, Leblon explains, it is best to rely on a combination of optical and thermal sensors—the former because a fire produces visible smoke and the latter to acquire the hot spot. However, smoke is detectable only some time after a fire has started and often it is conducted along the surface and emerges far from where the fire started.

Once a fire is progressing, optical or radar sensors can be used to map the burn area. “Radar is better because you can see through the smoke,” says Leblon. “At this level, the two are very complementary. The best approach is with three sensors: optical, radar, and thermal infrared. NASA planned to have a satellite with all three, but it never happened. Thermal infrared (which adds ground and canopy fire temperatures) is always coupled with optical. We can get the optical data easily, for free, for example from MODIS, Landsat, or NOAA-AVHRR. All three also have a thermal band, so you often have the two together.” MODIS has become the standard data source for monitoring fires at regional to global scales and is used for environmental policy and decision making. See Figure 3.

FIGURE 3.

MODIS provides the “big picture” view with daily fire activity monitoring. Shown here is an area of the state of Washington, U.S. Courtesy of NASA.

Besides detection and mapping, incident commanders need to predict a fire’s behavior in order to decide where to allocate crews and which areas to evacuate. “For real-time fire fighting, they use many thermal sensors for capturing where the hot sparks of a fire are and where they are through the smoke. Many of the larger state and federal agencies regularly use thermal imagery during significant fire scenarios,” says Buckley.

Three other critical types of real-time data are wind speed, wind direction, and humidity, especially in narrow valleys that create their own microclimate. “Many incident management teams use mobile remote weather stations to capture more detailed weather information on what is occurring in those valleys,” says Buckley. “It’s all about how quickly you can get accurate information.”

To observe fire behavior, microwave has the advantage that it is able to penetrate clouds and smoke, points out Michael Lefsky, an assistant professor in CSU’s Department of Forest, Rangeland, and Watershed Stewardship and the principal investigator on the High Park fire, which occurred in Colorado in June 2012.

TRADE-OFFS AND SYNERGIES

Satellite imagery provides extensive regional coverage with zero disturbance of the area viewed and enables data acquisition in less accessible areas on a regular and cost-effective basis. “The advantage of optical sensors is that they are free of charge,” Leblon points out. “For example, the U.S. Geological Survey provides georeferenced Landsat images that can be used directly without doing any fancy image processing. The major problem is the cloud cover. When you have a big fire, you can have a lot of smoke and you cannot see anything. Airborne data is very costly. Lidar, for example, is airborne only and it costs $300 per square kilometer. Radar data, even if it is from a commercial satellite, is only $4 per square kilometer, because the satellite is already there and the big bill was paid by the country that built it.”
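To put the per-square-kilometer prices quoted above in perspective, here is a rough back-of-the-envelope sketch. It applies them to an area of 136 square miles, the size given for the High Park study area later in this article. It assumes flat pricing with no mobilization, minimum-order, or processing costs, which is a simplification, and the function and variable names are hypothetical.

```python
SQ_KM_PER_SQ_MILE = 2.589988  # unit conversion factor

LIDAR_USD_PER_SQ_KM = 300  # airborne lidar, as quoted by Leblon
RADAR_USD_PER_SQ_KM = 4    # commercial satellite radar, as quoted by Leblon


def acquisition_cost(area_sq_km, usd_per_sq_km):
    """Flat-rate cost estimate: area times price per square kilometer."""
    return area_sq_km * usd_per_sq_km


# 136 square miles: the High Park area cited elsewhere in the article.
area_sq_km = 136 * SQ_KM_PER_SQ_MILE
lidar_cost = acquisition_cost(area_sq_km, LIDAR_USD_PER_SQ_KM)
radar_cost = acquisition_cost(area_sq_km, RADAR_USD_PER_SQ_KM)

print(f"Area: {area_sq_km:,.0f} square kilometers")
print(f"Lidar: ${lidar_cost:,.0f}")
print(f"Radar: ${radar_cost:,.0f}")
print(f"Lidar costs {lidar_cost / radar_cost:.0f} times as much")
```

Under these assumptions the ratio between the two is the same for any area, since both prices scale linearly.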

The tradeoffs between optical, thermal infrared, and microwave sensors have to do with the ability to detect the fires and with spatial resolution, or how fine-scale we can actually see things, explains Kampe. For example, he points out, NASA’s satellite-based MODIS has pixel size between 250 meters and one kilometer, which limits the ability to detect small fires. Because it is an infrared system, it does not transmit through clouds, which may make it impossible to estimate the extent of fires.

Like MODIS, ASTER, which was built by the Japanese and is being flown on a NASA satellite launched in 1999, operates in the short-wave infrared portion of the spectrum. However, it has a much smaller ground footprint of about 30 meters, so you can use it to detect small fires, Kampe explains. “The tradeoff there is that you don’t get global coverage in a day. It takes quite a number of days to be able to revisit the same point on Earth.”
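The effect of pixel size on small-fire detection can be illustrated with simple geometry. The sketch below compares the ground area of one pixel at the sizes mentioned above (250 meters and one kilometer for MODIS, about 30 meters for ASTER) with a hypothetical one-hectare fire. The fire size and the function names are assumptions made for illustration. Real detection also depends on how hot the fire is relative to its background, which this sketch ignores.

```python
def pixel_area_m2(pixel_size_m):
    """Ground area covered by one square pixel, in square meters."""
    return pixel_size_m ** 2


def fire_fraction_of_pixel(fire_area_m2, pixel_size_m):
    """Share of a single pixel that the fire could fill, capped at 1."""
    return min(1.0, fire_area_m2 / pixel_area_m2(pixel_size_m))


FIRE_AREA_M2 = 10_000  # hypothetical 1-hectare fire

sensors = {
    "MODIS (1 km)": 1000,
    "MODIS (250 m)": 250,
    "ASTER (30 m)": 30,
}

for name, size_m in sensors.items():
    fraction = fire_fraction_of_pixel(FIRE_AREA_M2, size_m)
    print(
        f"{name}: one pixel covers {pixel_area_m2(size_m):,} m^2; "
        f"the fire fills {fraction:.0%} of it"
    )
```

In this example the fire occupies only a small part of a one-kilometer pixel, while at 30 meters it spans several whole pixels.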

Microwave sensors can penetrate through clouds and vegetation, enabling detection of a fire that may be occurring in a forest in the understory. However, they are expensive and have even less spatial resolution than MODIS.

Although radar is theoretically able to acquire imagery regardless of the weather conditions, the availability of this sensor is often limited because of the longer repeat cycle of the satellites. Additionally, while radar images have a finer spatial resolution than optical or thermal infrared images, they cover a smaller area. Thus, radar data is complementary to optical or thermal infrared data.

“Microwave or radar sensors are completely independent of weather conditions,” says Leblon. “You can even use them to acquire images at night. A microwave sensor is like a camera with a flash; an optical sensor is like a camera without a flash. If you have a camera without a flash, you are very limited as to the pictures that you can take.”

THE HIGH PARK WILDFIRE

The study of the High Park wildfire is a joint project of NEON and CSU, in collaboration with local, state, and federal agencies and land managers. It aims to provide critical data to the communities still dealing with major water quality, erosion, and ecosystem restoration issues in an area spanning more than 136 square miles. The project integrates airborne remote sensing data collected by NEON’s Airborne Observation Platform (AOP) with ground-based data from a targeted field campaign conducted by CSU researchers. It aims to help the scientific and management communities understand how pre-existing conditions influenced the behavior and severity of the fire and how the fire’s patterns will affect ecosystem recovery.

The aircraft-mounted instrumentation in the AOP includes a next-generation version of the Airborne Visible InfraRed Imaging Spectrometer (AVIRIS), a waveform lidar instrument, a high-resolution digital camera, and a dedicated GPS-IMU subsystem. “Being an airborne instrument, it has the capability to resolve features as small as a meter on the ground, which enables us to detect individual trees and shrubs,” says Kampe. The combination of biochemical and structural information provided by spectroscopy and waveform LiDAR can be used to observe many features of land use and to observe and quantify pest and pathogen outbreaks, responses to disturbances like wildfire, and spatial patterns of erosion and vegetation recovery. See Figures 4-5.

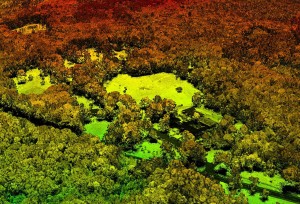

FIGURE 4.

A high-resolution LiDAR image from NEON airborne observation platform (AOP) test flights over Harvard Forest, MA, summer 2011. Credit: NEON.

FIGURE 5. (Located in the title feature)

Scientist Bryan Karpowicz monitors AOP instrumentation on a laptop during a test flight. Photo by Keith Krause, NEON.

“We acquire the raw data and convert it into usable data for the scientific community,” says Kampe. “We do things like geolocation, radiometric calibration… In the High Park area, we weren’t trying to map the active fire; we were trying to map the entire burnt area and study the effects of the fire on vegetation and the ecology as a whole. In the long term, we hope to be able to look at post-fire recovery and the fire’s impact on the local region. The lidar data has been made available to Lefsky and his team and we anticipate that the imaging spectrometer data will be made available to him shortly.”

“We are obtaining quite a lot of information and can provide many different products,” says Kampe. “We can discriminate among ash, soil, and live or dead vegetation. Looking at post-fire recovery, the imaging spectrometer gives us the ability to map the regeneration of vegetation and also to discriminate between different types of vegetation—trees vs. shrubs vs. grass—even to the point where we can determine, in some cases, what types of species are growing.”

“We get a map of the forest canopy itself and the ability to estimate such things as total biomass,” Kampe continues. “For post-fire areas, we use this to look at the state of the vegetation right after the fire. Then we subtract the vegetation and get a map of the bare ground. One of the things we look at, particularly in High Park, is the impact of erosion that may occur in areas that were burnt severely. Then we can also use the lidar data to map the growth in vegetation. The combination of the imaging spectrometer and the lidar makes our system and our study somewhat unique and should provide some very good information to foresters, land developers, and water resource managers.”

CSU’s team conducted the remainder of the data acquisition: the ground sampling and deriving the science from those products. See Figures 6-8. “The High Park fire was a target of opportunity,” says Lefsky. “It was near NEON headquarters and near CSU, where there’s a lot of bark ecology expertise, so we’re really targeting the High Park fire to look at some of these questions.”

“In the field,” says Lefsky, “we collected mostly conventional forest inventory parameters. That’s all being used for two purposes: to calibrate the lidar data, so that we can get estimates of how much biomass was there before the fire, and to assist us with mapping of burn severity, and presence or absence of bark beetle infestation prior to the fire. There is evidence that when burn severity is moderate, the dead trees that are left by bark beetle infestation can increase the severity and the speed at which fire burns through an area. On the other hand, when fire is really going, it doesn’t seem to make much of a difference. But that is an open research question and something that we will be examining during the study.”

Remote sensing has become an essential tool for preventing, fighting, and managing fires. By using optical, thermal infrared, lidar, radar, and microwave sensors, often in combination, to detect a fire’s electromagnetic effects, researchers and firefighters are able to analyze the risk of fire before it starts, detect it once it starts, track its development, and map the areas burned.

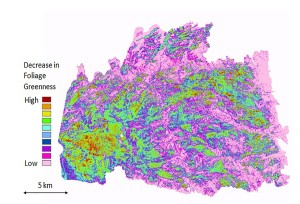

FIGURE 6.

Fire severity expressed as decrease in foliage greenness (NDVI) between Sept. 17, 2009 and Oct. 17, 2012. Courtesy of Michael Lefsky, Center for Ecological Applications of Lidar, Department of Ecosystem Science and Sustainability, Colorado State University.

FIGURE 7.

Multispectral RapidEye imagery overlaid on a digital terrain model derived from NEON lidar. Courtesy of Michael Lefsky, Center for Ecological Applications of Lidar, Department of Ecosystem Science and Sustainability, Colorado State University.

FIGURE 8.

Burn area inside the High Park fire scar, October 2012.

Photo by Jennifer Walton, NEON.